ImageNet Roulette was part of a broader project to draw attention to the things that can – and regularly do – go wrong when artificial intelligence models are trained on problematic data.

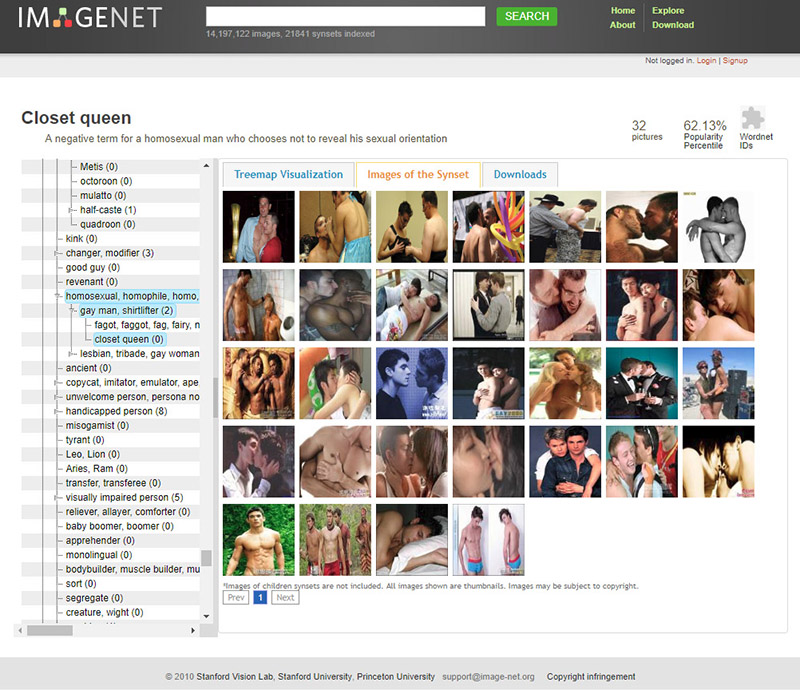

ImageNet Roulette is trained on the “person” categories from a dataset called ImageNet (developed at Princeton and Stanford Universities in 2009), one of the most widely used training sets in machine learning research and development.

The project was a provocation, acting as a window into some of the racist, misogynistic, cruel, and simply absurd categorizations embedded within ImageNet and other training sets that AI models are built upon. My project lets the training set “speak for itself,” and in doing so, highlights why classifying people in this way is unscientific at best, and deeply harmful at worst.

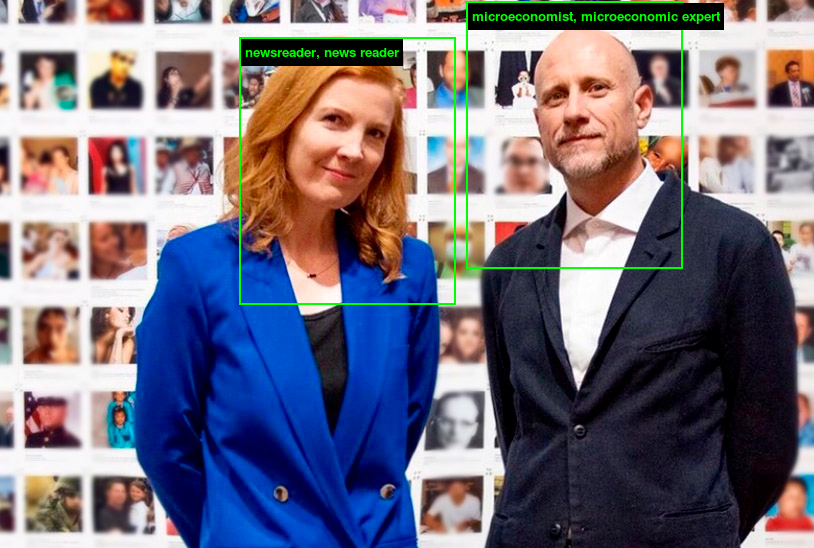

Excavating AI is my article, co-authored with Kate Crawford, on ImageNet and other problematic training sets. It’s available here.

ImageNet Roulette currently only exists as an installation in museums and galleries – its online incarnation has been taken down.